|

Google Associate Cloud Engineer Exam Page 10 (Dumps)

Question No:-91

|

Your company's infrastructure is on-premises, but all machines are running at maximum capacity. You want to burst to Google Cloud. The workloads on Google Cloud must be able to directly communicate to the workloads on-premises using a private IP range. What should you do?

1. In Google Cloud, configure the VPC as a host for Shared VPC.

2. In Google Cloud, configure the VPC for VPC Network Peering.

3. Create bastion hosts both in your on-premises environment and on Google Cloud. Configure both as proxy servers using their public IP addresses.

4. Set up Cloud VPN between the infrastructure on-premises and Google Cloud.

|

Answer:-4. Set up Cloud VPN between the infrastructure on-premises and Google Cloud.
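For traffic to flow over a VPN by private address, the on-premises range and the VPC range generally need to be private and must not overlap. A minimal sketch of that pre-check using Python's standard `ipaddress` module follows. The function name `check_ranges` and the CIDR values are hypothetical and do not come from the question; note also that `is_private` covers RFC 1918 ranges plus some other reserved ranges.

```python
import ipaddress


def check_ranges(on_prem_cidr: str, vpc_cidr: str) -> bool:
    """Return True if both ranges are private and do not overlap."""
    on_prem = ipaddress.ip_network(on_prem_cidr)
    vpc = ipaddress.ip_network(vpc_cidr)
    return on_prem.is_private and vpc.is_private and not on_prem.overlaps(vpc)


# Hypothetical example ranges (assumptions, not from the question)
print(check_ranges("10.0.0.0/16", "10.1.0.0/16"))    # distinct private ranges
print(check_ranges("10.0.0.0/16", "10.0.128.0/17"))  # second range sits inside the first
```

The first call reports usable ranges; the second reports a conflict because the two ranges overlap.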

|

|

Question No:-92

|

You want to select and configure a solution for storing and archiving data on Google Cloud Platform. You need to support compliance objectives for data from one geographic location. This data is archived after 30 days and needs to be accessed annually. What should you do?

1. Select Multi-Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Coldline Storage.

2. Select Multi-Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Nearline Storage.

3. Select Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Nearline Storage.

4. Select Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Coldline Storage.

|

Answer:-4. Select Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Coldline Storage.
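The lifecycle rule in this answer can be written as a small JSON configuration. The sketch below builds it in Python; the helper name `lifecycle_config` is hypothetical, while the `SetStorageClass` action and the `age` condition follow the Cloud Storage lifecycle configuration format.

```python
import json


def lifecycle_config(age_days: int, storage_class: str) -> dict:
    """Build a lifecycle configuration that changes the storage class
    of objects once they are older than age_days."""
    return {
        "rule": [
            {
                "action": {"type": "SetStorageClass", "storageClass": storage_class},
                "condition": {"age": age_days},
            }
        ]
    }


# Move objects to Coldline 30 days after creation
config = lifecycle_config(30, "COLDLINE")
print(json.dumps(config, indent=2))
```

Saved to a file, a configuration like this could be applied to a bucket with `gsutil lifecycle set`. The bucket itself would be created in a single region to keep the data in one geographic location.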

|

|

Question No:-93

|

Your company uses BigQuery for data warehousing. Over time, many different business units in your company have created 1000+ datasets across hundreds of projects. Your CIO wants you to examine all datasets to find tables that contain an employee_ssn column. You want to minimize effort in performing this task.

What should you do?

1. Go to Data Catalog and search for employee_ssn in the search box.

2. Write a shell script that uses the bq command line tool to loop through all the projects in your organization.

3. Write a script that loops through all the projects in your organization and runs a query on INFORMATION_SCHEMA.COLUMNS view to find the employee_ssn column.

4. Write a Cloud Dataflow job that loops through all the projects in your organization and runs a query on INFORMATION_SCHEMA.COLUMNS view to find employee_ssn column.

|

Answer:-4. Write a Cloud Dataflow job that loops through all the projects in your organization and runs a query on INFORMATION_SCHEMA.COLUMNS view to find employee_ssn column.

Most people choose option 1 as their answer.
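Options 3 and 4 both depend on querying the `INFORMATION_SCHEMA.COLUMNS` view once per dataset. As a rough illustration of what each iteration of such a loop would run, the sketch below only builds the SQL text. The function name `ssn_column_query` and the project and dataset IDs are hypothetical, and executing the queries would need a BigQuery client, which is not shown.

```python
def ssn_column_query(project_id: str, dataset_id: str,
                     column: str = "employee_ssn") -> str:
    """Build a query that lists tables in one dataset containing the column."""
    return (
        "SELECT table_catalog, table_schema, table_name, column_name\n"
        f"FROM `{project_id}.{dataset_id}.INFORMATION_SCHEMA.COLUMNS`\n"
        f"WHERE column_name = '{column}'"
    )


# Hypothetical (project, dataset) pairs; a real script would list them via the API
targets = [("hr-project", "payroll"), ("sales-project", "crm")]
queries = [ssn_column_query(project, dataset) for project, dataset in targets]
print(queries[0])
```

With 1000+ datasets across hundreds of projects, this loop has to be written, run, and maintained by hand. That effort is what the question asks you to minimize, and it is the reason a single catalog search is attractive.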

|

|

Question No:-94

|

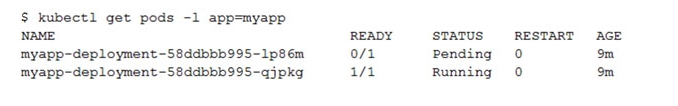

You create a Deployment with 2 replicas in a Google Kubernetes Engine cluster that has a single preemptible node pool. After a few minutes, you use kubectl to examine the status of your Pod and observe that one of them is still in Pending status:

What is the most likely cause?

1. The pending Pod's resource requests are too large to fit on a single node of the cluster.

2. Too many Pods are already running in the cluster, and there are not enough resources left to schedule the pending Pod.

3. The node pool is configured with a service account that does not have permission to pull the container image used by the pending Pod.

4. The pending Pod was originally scheduled on a node that has been preempted between the creation of the Deployment and your verification of the Pods' status. It is currently being rescheduled on a new node.

|

Answer:-2. Too many Pods are already running in the cluster, and there are not enough resources left to schedule the pending Pod.

Most people choose option 2 as the answer.

|

|

Question No:-95

|

You want to find out when users were added to Cloud Spanner Identity and Access Management (IAM) roles on your Google Cloud Platform (GCP) project. What should you do in the GCP Console?

1. Open the Cloud Spanner console to review configurations.

2. Open the IAM & admin console to review IAM policies for Cloud Spanner roles.

3. Go to the Stackdriver Monitoring console and review information for Cloud Spanner.

4. Go to the Stackdriver Logging console, review admin activity logs, and filter them for Cloud Spanner IAM roles.

|

Answer:-2. Open the IAM & admin console to review IAM policies for Cloud Spanner roles.

Most people choose option 2 as the answer.
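Option 4 describes filtering admin activity logs in Stackdriver Logging. As a rough sketch of what such a filter could look like, the code below builds a filter string for the admin activity audit log. The function name `iam_change_filter` and the project ID are hypothetical, and matching on `SetIamPolicy` is an assumption about how role grants appear in the log entries.

```python
def iam_change_filter(project_id: str) -> str:
    """Build a Logging filter for IAM policy changes in admin activity logs."""
    # Admin activity audit log name for the project
    log_name = f"projects/{project_id}/logs/cloudaudit.googleapis.com%2Factivity"
    # ':' is the "has" (substring) operator; SetIamPolicy is an assumed method name
    return f'logName="{log_name}" AND protoPayload.methodName:"SetIamPolicy"'


# Hypothetical project ID
print(iam_change_filter("my-project"))
```

Each matching entry carries a timestamp, which is the piece of information the question asks for. The IAM policy view shows current bindings rather than when they were made.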

|

|

Question No:-96

|

Your company implemented BigQuery as an enterprise data warehouse. Users from multiple business units run queries on this data warehouse. However, you notice that query costs for BigQuery are very high, and you need to control costs. Which two methods should you use? (Choose two.)

1. Split the users from business units to multiple projects.

2. Apply a user- or project-level custom query quota for BigQuery data warehouse.

3. Create separate copies of your BigQuery data warehouse for each business unit.

4. Split your BigQuery data warehouse into multiple data warehouses for each business unit.

5. Change your BigQuery query model from on-demand to flat rate. Apply the appropriate number of slots to each Project.

|

|